“The whole story hinges on that kind of scaling,” said David Hayes, a physicist at the quantum computing company Quantinuum. “It’s really exciting to see that become a reality.”

Majority Rules

The simplest version of error correction works on ordinary “classical” computers, which represent information as a string of bits, or 0s and 1s. Any random glitch that flips the value of a bit will cause an error.

You can guard against errors by spreading information across multiple bits. The most basic approach is to rewrite each 0 as 000 and each 1 as 111. Any time the three bits in a group don’t all have the same value, you’ll know an error has occurred, and a majority vote will fix the faulty bit.

But the procedure doesn’t always work. If two or more bits in any triplet suffer errors at the same time, the majority vote will return the wrong answer.
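The three-bit scheme is small enough to sketch in a few lines of Python. This is only an illustration of the idea described above; the function names `encode` and `decode` are made up for the example.

```python
def encode(bits, n=3):
    """Repeat each bit n times, so 0 -> 000 and 1 -> 111 when n = 3."""
    return [b for b in bits for _ in range(n)]


def decode(coded, n=3):
    """Recover each bit by majority vote over its group of n bits (n odd)."""
    groups = [coded[i:i + n] for i in range(0, len(coded), n)]
    return [1 if sum(g) > n // 2 else 0 for g in groups]


message = [0, 1]
coded = encode(message)        # [0, 0, 0, 1, 1, 1]

# One flipped bit in the first triplet: the vote repairs it.
one_flip = coded.copy()
one_flip[1] ^= 1
print(decode(one_flip))        # [0, 1]

# Two flipped bits in the same triplet: the vote picks the wrong value.
two_flips = coded.copy()
two_flips[0] ^= 1
two_flips[1] ^= 1
print(decode(two_flips))       # [1, 1]
```

The second case is the failure described above: the majority of the damaged triplet now points to the wrong bit, and nothing in the vote can detect that.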

To avoid this, you could increase the number of bits in each group. A five-bit version of this “repetition code,” for example, can tolerate two errors per group. But while this larger code can handle more errors, you’ve also introduced more ways things can go wrong. The net effect is beneficial only if each individual bit’s error rate is below a specific threshold. If it’s not, then adding more bits only makes your error problem worse.
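The threshold effect can be checked with a short calculation. The sketch below assumes a deliberately simple model that is not spelled out in the text: every bit flips independently with the same probability `p`, and the majority vote itself never makes a mistake. Under those assumptions the threshold sits at 50%; realistic systems, where the correction procedure is itself noisy, have much lower thresholds.

```python
from math import comb


def logical_error_rate(p, n):
    """Chance that a majority vote over n bits fails (n odd).

    Assumes each bit flips independently with probability p. The vote
    fails when more than half of the n bits have flipped.
    """
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


for p in (0.1, 0.6):
    rates = [logical_error_rate(p, n) for n in (1, 3, 5)]
    print(p, [round(r, 4) for r in rates])
```

With `p = 0.1`, the failure rate falls from 10% for a single bit to roughly 2.8% for three bits and roughly 0.9% for five. With `p = 0.6`, it climbs instead, to about 65% and then about 68%: above the threshold, extra bits make matters worse.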

As usual, in the quantum world, the situation is trickier. Qubits are prone to more kinds of errors than their classical cousins. It’s also much harder to manipulate them. Every step in a quantum computation is another source of error, as is the error-correction procedure itself. What’s more, there’s no way to measure the state of a qubit without irreversibly disturbing it — you must somehow diagnose errors without ever directly observing them. All of this means that quantum information must be handled with extreme care.

“It’s intrinsically more delicate,” said John Preskill, a quantum physicist at the California Institute of Technology. “You have to worry about everything that can go wrong.”

At first, many researchers thought quantum error correction would be impossible. They were proved wrong in the mid-1990s, when researchers devised simple examples of quantum error-correcting codes. But that only changed the prognosis from hopeless to daunting.

When researchers worked out the details, they realized they’d have to get the error rate for every operation on physical qubits below 0.01% — only one in 10,000 could go wrong. And that would just get them to the threshold. They would actually need to go well beyond that — otherwise, the logical qubits’ error rates would decrease excruciatingly slowly as more physical qubits were added, and error correction would never work in practice.

Nobody knew how to make a qubit anywhere near good enough. But as it turned out, those early codes only scratched the surface of what’s possible.

The Surface Code

In 1995, the Russian physicist Alexei Kitaev heard reports of a major theoretical breakthrough in quantum computing. The year before, the American applied mathematician Peter Shor had devised a quantum algorithm for breaking large numbers into their prime factors. Kitaev couldn’t get his hands on a copy of Shor’s paper, so he worked out his own version of the algorithm from scratch — one that turned out to be more versatile than Shor’s. Preskill was excited by the result and invited Kitaev to visit his group at Caltech.

“Alexei is really a genius,” Preskill said. “I’ve known very few people with that level of brilliance.”

That brief visit, in the spring of 1997, was extraordinarily productive. Kitaev told Preskill about two new ideas he’d been pursuing: a “topological” approach to quantum computing that wouldn’t need active error correction at all, and a quantum error-correcting code based on similar mathematics. At first, he didn’t think that code would be useful for quantum computations. Preskill was more bullish and convinced Kitaev that a slight variation of his original idea was worth pursuing.

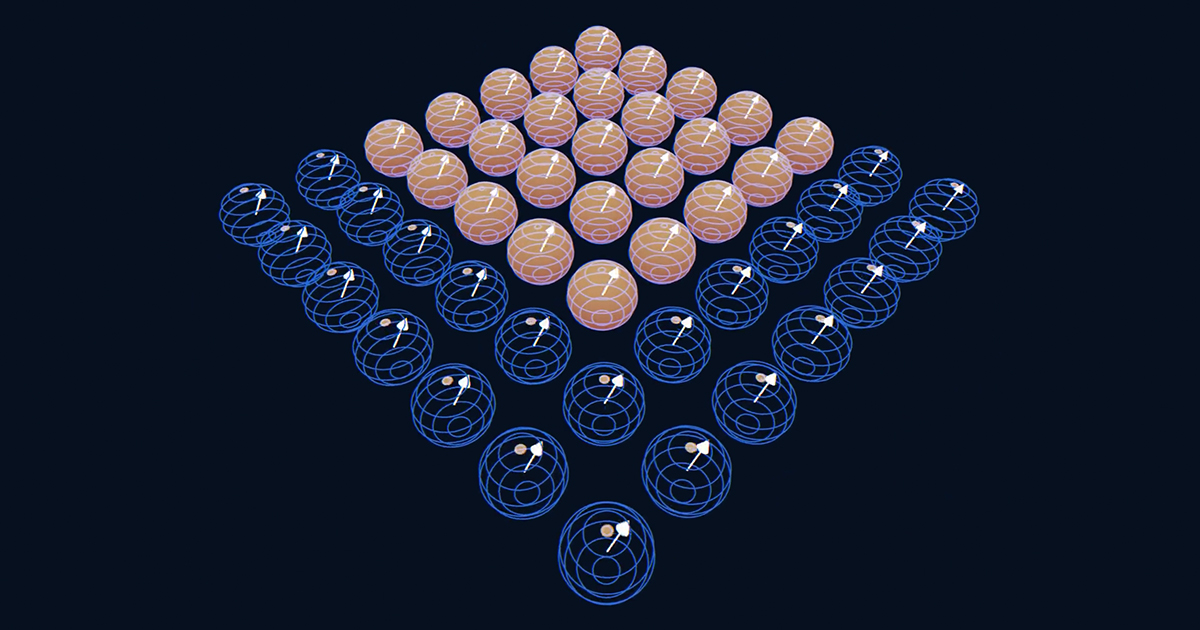

That variation, called the surface code, is based on two overlapping grids of physical qubits. The ones in the first grid are “data” qubits. These collectively encode a single logical qubit. Those in the second are “measurement” qubits. These allow researchers to snoop for errors indirectly, without disturbing the computation.

This arrangement requires a lot of qubits, but the surface code has compensating advantages. Its error-checking scheme is much simpler than those of competing quantum codes. It also involves interactions only between neighboring qubits, the feature that Preskill found so appealing.

In the years that followed, Kitaev, Preskill and a handful of colleagues fleshed out the details of the surface code. In 2006, two researchers showed that an optimized version of the code had an error threshold around 1%, 100 times higher than the thresholds of earlier quantum codes. These error rates were still out of reach for the rudimentary qubits of the mid-2000s, but they no longer seemed so unattainable.

Despite these advances, interest in the surface code remained confined to a small community of theorists — people who weren’t working with qubits in the lab. Their papers used an abstract mathematical framework foreign to the experimentalists who were.

“It was just really hard to understand what’s going on,” recalled John Martinis, a physicist at the University of California, Santa Barbara who is one such experimentalist. “It was like me reading a string theory paper.”

In 2008, a theorist named Austin Fowler set out to change that by promoting the advantages of the surface code to experimentalists throughout the United States. After four years, he found a receptive audience in the Santa Barbara group led by Martinis. Fowler, Martinis and two other researchers wrote a 50-page paper that outlined a practical implementation of the surface code. They estimated that with enough clever engineering, they’d eventually be able to reduce the error rates of their physical qubits to 0.1%, far below the surface-code threshold. Then in principle they could scale up the size of the grid to reduce the error rate of the logical qubits to an arbitrarily low level. It was a blueprint for a full-scale quantum computer.
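To get a feel for why a physical error rate of 0.1% matters so much, here is a rough sketch using a commonly quoted rule of thumb for the surface code: the logical error rate scales approximately as (p / p_th) raised to the power (d + 1) / 2, where d is the width of the grid of data qubits (the code "distance"). The prefactor of 0.03 and the function name are illustrative assumptions, and the formula is only a heuristic for error rates well below the threshold, not a substitute for a full simulation.

```python
def logical_error_estimate(p, d, p_th=0.01, prefactor=0.03):
    """Heuristic logical error rate for a distance-d surface code.

    p         physical error rate per operation
    d         code distance (odd): the side length of the data-qubit grid
    p_th      threshold, taken as roughly 1% as in the text
    prefactor illustrative constant (an assumption for this sketch)
    """
    return prefactor * (p / p_th) ** ((d + 1) // 2)


# Physical error rate of 0.1%, ten times below the assumed threshold.
for d in (3, 5, 7, 9):
    print(d, logical_error_estimate(0.001, d))
```

Under these assumptions, each step up in grid size (from d to d + 2) cuts the estimated logical error rate by another factor of 10. If the physical error rate were only slightly below the threshold, the same step would buy almost nothing, which is why getting well below the threshold matters.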